The Study

Introduction#

The widespread diffusion of generative AI (GAI) chatbots in recent years has been hailed as bringing social benefits to many fields,1 including for example in education or healthcare.2 At the same time, research has highlighted the risks that models such as Large Language Models (LLMs), that power those chatbots, can pose to society. The literature has studied how these models could reproduce social biases, or instances of “discrimination for, or against, a person or group, or a set of ideas or beliefs, in a way that is prejudicial or unfair.”3 In particular, Rozado has demonstrated how AI chatbots could reproduce political biases.4 If employed in high-stake decision-making processes, these biases could notably result in discrimination.5 Researchers and developers of various LLMs have suggested and implemented steps to mitigate these risks, including through changes made to the training data of their LLMs, the adaptation of the models’ execution strategy and the application of post-processing corrections to the models' outputs.6 Nevertheless, the lack of transparency over how these LLMs operate or are trained, and the limited access of third-parties to such models, have made it difficult for researchers to assess the efficiency of these measures in reducing biases.78

Against this backdrop, the company Mistral AI was founded in 2023.9 The company has aimed to promote transparency over large language models by developing and releasing to the public LLMs and their architecture and weights.10 In September 2023, the company released Mistral-7B, its first publicly available LLM.11 This model, as claimed by its developers, was trained with “instruction datasets publicly available on the Hugging Face repository” and leveraged different technologies to make it outperform other LLMs such as Llama 2.12 In keeping with its transparency-oriented strategy, Mistral has since then pursued the development and release of other models, including larger and more advanced “Mixture of Experts” models such as Mixtral-8x22b which contains more parameters than its counterparts.13 It also released in 2024 a publicly accessible AI chatbot called “Le Chat”.14

Highlighting the public availability of their model and in general their transparency-oriented strategy, Mistral has portrayed itself as a European-born solution that enables Europe to develop its own large language models independently from those developed by companies from other continents such as North America.15 Furthermore, in reaction to studies that highlighted some biases of models, most of which having been developed by U.S. companies (see notably 16), the development team has claimed to have undertaken efforts to make Mistral’s models “neutral” or at least to ensure that their models exhibit different biases than those shown by the U.S. models studied in the literature.17 It has especially emphasized the fact that Mistral AI was “bearing a European culture.”17

In our paper, leveraging the open-sourceness of Mistral’s LLMs, we assessed the veracity of those claims. In particular, we sought to understand whether Mistral’s claims that its models are oriented toward the values and culture shared across Europe and more specifically the European Union hold true and whether these European biases (should they exist) correlate or not with the values of a specific member state of the Union.

Literature Review#

In order to assess the cultural biases of an LLM like Mistral’s Le Chat, it is important to place such an effort within the greater literature on biases in AI systems. Indeed while existing studies extensively uncover algorithmic biases in LLMs on issues related to gender or race, less attention has been paid to other determinants of identity which are also worth exploring.18 Aiming to address the limited research on algorithmic political bias, where AI systems will engage with users depending on their political leanings, Uwe Peters notably advocates for further research in this field due to the unique ability of such biases to affect people’s behavior.18 He argues that racial and gender biases, while important to study, explicitly clash with social norms in democratic societies.18 Democratic discourse however calls for a certain level of aversion for political opposites, something which is not tolerated for gender or race.18 This key difference poses the risk that instances where political polarization might be encouraged, and potentially included in the AI system’s training data for example18, might then spill over into situations where this would not be acceptable, such as hiring algorithms that might reject candidates of similar competency based on their political preferences.18 The limitation of social penalization for political bias, is feared to carry over into AI systems and exert significant influence on users.18

Focusing on largely overlooked biases in AI systems, such as cultural or political preferences, is therefore what this study aims to do. Conducting such research naturally builds upon the various contributions in the existing literature on similar biases. Masoud et al for example assess across multiple LLMs how they perform on a Cultural Alignment Test, based on the cultural dimension framework designed by Geert Hofstede.19 Comparing the responses provided by these AI models with responses by real people, they are able to identify where the LLMs lie on a series of values that define a country’s culture (for example individualism versus collectivism) and how these answers may differ from the responses measured in the US, Saudi Arabia, Slovakia, and China.19 The benchmarking data generated by Hofstede and used by Masoud et al. in their study depends on undisclosed calculations that make replication impossible for this research. Nevertheless, the author’s main contribution to this paper lies in the notion of comparing the responses provided by an LLM with responses seen between countries.

In a similar vein, Santurkar et al. analyze how LLMs perform, in an American context, on a survey meant to identify their political leanings.20 Finding substantial mismatches between the opinions of LLMs and the American public on issues related to politics, personal relationships, or science, the authors provide their own respective approach of measuring cultural bias.20 They interestingly depend on survey questions to test algorithmic bias, as these provide a trustworthy snapshot of public opinion on issues of importance that can easily be adapted into prompts for LLMs.20 They also introduce the notion of “steering” the AI system, to encourage it to take on the role of a given demographic (i.e. pretending to be a person of a specific social group) in order to compare its answers with those of real people from such a cohort.20

These key methodological points, which this essay will depend on, as discussed in the methodology section, are also included in Motoki et al. 16 on ChaptGPT’s political biases. Using the Political Compass to assess one’s political allegiances, the authors find that the LLM bears significant left-leaning bias.16 More interestingly for this research however, they also advocate for the use of repetition to avoid randomness in the answers provided by the AI model.16 In their view, by calculating the mean of 100 responses provided by ChatGPT on a survey composed of Lickert scale questions, the authors are able to derive an indication of the model’s “opinions” on certain issues, taking into account the unavoidable variability between individual responses.16

Overall, in its current state, the literature on political/cultural biases in LLMs is composed of studies with varying methodological approaches, datasets, and targeted biases, with their own respective strengths and weaknesses. Through this review, this study aims to uncover some of the most compelling features in these studies, which it will use and discuss in more detail below. In addition, this study will aim to also contribute to this literature by testing the biases of an LLM (“Le Chat” by Mistral) which does not feature in the studies listed above, with a novel dataset (the EU’s Eurobarometer report on the “Values and Identities of EU Citizens”). Testing Le Chat’s supposed European biases, as mentioned above, will serve as a European contribution to a literature mostly focused on American politics and AI models.

Methodology#

Hypotheses#

The following methodology is largely inspired by the work of Motoki, Neto, and Rodrigues on measuring ChatGPT's political bias,16 but with a different final goal. Indeed, the objective of this study is to exhibit a potential geographical bias in Le Chat’s responses to a series of questions in line with what its founders consider to be its “European-centered” point of view. In short, the idea of measuring a potential cultural orientation of the model is possible by asking Mistral a set of questions that addresses values and identities of EU citizens, either with Mistral impersonating a European citizen, an American citizen, or without specifying any profile. While this will be discussed in more detail below, such an endeavor calls for a two-level analysis measuring (i) a potential continental orientation in the model, or (ii) a potential national orientation in the model. This leads us to our main hypotheses:

- Hypothesis 1: The Mistral model Open-Mixtral-8x22b shows a significant European orientation in the answers it provides.

- Hypothesis 2: The Mistral model Open-Mixtral-8x22b shows a significant national orientation in the answers it provides, especially in line with western European views.

To answer both hypotheses, a necessary first step will be to capture Mistral’s default point of view (expressed in the answers it provides to the questions discussed previously) to see how this differs from other benchmarks. First, questions will be asked of the model without specifying any impersonation to catch its neutral point of view. The estimate of Mistral’s neutral orientation, as well as the others discussed below, will be based on a score resulting from its answers to the survey questions on a 0 to 10 scale. Moreover, to be able to put the neutral sentiment of the model in context, it is necessary to capture in comparison the model’s sentiment when forced to impersonate a person from a specific region. In that respect, this study will aim to determine the neutral orientation’s similarity to a European orientation and respectively an American orientation (also serving as a benchmark). Indeed, while the European orientation must necessarily be compared with the neutral one, the American orientation has been chosen as a reference point for the neutral orientation’s differences with the European orientation. Simply put, three different orientations of the model will be estimated, where two force the model to impersonate a citizen (of either the US or EU) and the third specifies no nationality. The estimation of the linear regression between \(Mistral_{Neutral}\) and \(Mistral_{EU}\), and between \(Mistral_{Neutral}\) and \(Mistral_{US}\) will be calculated via the estimation of the coefficient of following equation:

where \(Mistral_{Neutral,i}\) is the score of the default model to question \(i\), \(Mistral_{k,i}\) is whether the score of the model is EU or US oriented to question \(i\), \(\beta_{1}\) is the linear regression coefficient, \(\beta_{1}\) and \(i\) constants. The interpretation of \(\beta_{1}\) will help to answer hypothesis 1.

One challenge however is properly capturing the model’s sentiment with the sets of questions. As described by Motoki et al.16 in their study, achieving a robust estimation of the score of each question can only be accomplished by asking the model to answer 100 times, for each type of impersonation (Neutral, US, and EU), the same sets of questions, shuffled in random order. Equation \(\ref{eq:first}\) described the process described above.

Calculations#

Once all answers have been produced (in theory: \(3\cdot(11+11+13)\cdot100 = 10500\) answers), we calculate the mean score of each question thanks to a statistical method called “1000-times bootstrapped mean”. At this stage, each impersonation has a single value for each of the 35 questions corresponding to the 1000-times bootstrapped mean of the 100 answers. Finally, we applied these values to equation \(\ref{eq:first}\) in order to find the \(\beta_{1}\) value. Another estimation of \(\beta_{1}\) called \(\beta_{dimensional}\) is the result of the linear regression \(\ref{eq:first}\) where \(i\) is no longer the 1000-times bootstrapped mean of each question but rather the mean of the 1000-times bootstrapped mean of all questions of a dimension or set of questions (i.e. personal values, identity, and EU values). This method should give the main importance to dimensions rather than questions themselves.

Hypothesis 2 will be addressed with the comparison between the responses provided by Mistral Neutral (who was not asked to impersonate a specific citizen) to the survey and the real answers for the same survey from each EU member state. This method allows the confrontation between simulated results and real results. The similarity score will be calculated as follows (equation \(\ref{eq:second}\)):

Where \(Mistral_{Neutral,k}\) is the mean score of the questions belonging to dimension k addressed to Mistral without any impersonation requirement. \(Survey_{country,k}\) is the mean score from the survey of the questions belonging to dimension k to a given country. The similarity index, it is important to note, is between 0 and 1 where the former means that scores are completely opposed and the latter means that scores are the same.

Data#

As previously mentioned, in order to estimate the belonging to a given national or continental identity, this study refers to the first (and latest) “Values and identities of EU citizens” survey, which was requested by the European Commission Joint Research Centre.(1) Realized between October and November 2020, it gathered around 27,000 respondents, aged over 15 years old, from all 27 EU Member States. In most cases, the survey was conducted in the mother tongue of the interviewee. Respondents were randomly selected with a design aiming to account for different social and demographic backgrounds (notably metropolitan, urban and rural areas). Replies were, when feasible, collected through face-to-face interviews in individuals’ homes (or doorsteps). Due to the restrictions induced by the COVID-19 pandemic, a number of interviews were conducted online. In particular, in seven countries, all interviews had to be conducted online. For each country, an average reply is obtained for each question by matching the responding sample to the total population. This is done by using weights to account for the country’s characteristics (gender and age distribution, repartition of the population in the different regions, degree of urbanization).

The survey is organized in three main sections. The first, composed of 13 questions, deals with the importance of personal values for EU citizens. It is based on Schwartz’ theory of basic human values, which divides values along four groups: conservation, openness to change, self-enhancement, and self-transcendence 21. The “conservation” questions of the survey are about one’s support for rules and regulations, adoption of traditional values and norms, and the importance of feeling safe and secure. The “openness to change” questions ask individuals to assess the importance of making their own decisions and developing their own opinions, and their interest for new experiences. The “self-enhancement” questions explore the extent to which someone wants to tell others what they should do, and individuals’ preoccupation with exterior signs of wealth. Lastly, the “self-transcendence” questions are about the importance given to listening to others, caring for close ones and nature, and the significance of ensuring equal opportunity for everyone. Interestingly, values linked to “conservation” are usually seen as opposing the “openness to change” ones, just as those related to “self-enhancement” are viewed as opposing the “self-transcendence” values. For each question, respondents are given a brief description of someone (example: “It is important to him/her to be the one who tells others what to do”) and they have to answer, on a scale from 1 (“not like you at all”) to 6 (“very much like you”) how much the person described is like them.

The second section delves into the identities of EU citizens. Interviewees are asked 12 questions about how much they identify themselves with their occupation, ethnic background or race, gender, age, sexual orientation, religion, nationality, political leanings, nationality, European identity, family, and to what extent they have a regional point of view on topics. For each question, respondents answer on a scale from 0 (“not at all”) to 10 “a lot”.

The third section studies the attitudes of EU citizens towards the EU values contained in the Article 2 of the Treaty on European Union: “The Union is founded on the values of respect for human dignity, freedom, democracy, equality, the rule of law and respect for human rights, including the rights of persons belonging to minorities. These values are common to the Member States in a society in which pluralism, non-discrimination, tolerance, justice, solidarity and equality between women and men prevail.” To measure these values, respondents have to answer 11 questions that can be grouped on 4 main themes: freedom and democracy; respect for human rights, human dignity and solidarity; rule of law and justice; non-discrimination, equality and tolerance. The “freedom and democracy” questions are about the extent of EU citizens' support for freedoms such as freedom of thought, religion or peaceful assembly. The “respect for human rights, human dignity and solidarity” questions are about the position of individuals on death penalty, political asylum and the provision of support to vulnerable citizens. The “rule of law and justice” questions are about individuals’ valuation of equality before the law, the right to a fair trial and independent judiciary. Finally, the “non-discrimination, equality and tolerance” questions are about the extent to which individuals reject discrimination on any ground, support measures for gender equality, and their respect for others’ lifestyle choices and family.

The “Values and identities of EU citizens” survey, described as “the first of its kind” appears relevant for our study for two reasons. Firstly, its scale is helpful as it covers the entire European Union. This is an asset for our project because, for each question, an “European answer” is provided by averaging the answers of all 27 Member States. The average is weighted by the authors to account for differences in population sizes between countries). We use these “European answers” as a proxy for the European identity in this study. Secondly, it also provides good insights on national identities as it covers many dimensions in which individuals’ peculiarities are rooted. This allows us to measure how accurately Mistral's neutral answers overlap with those from a given Member State by comparing its answers with the actual country results.

Using the model Open-Mixtral-8x22b, whose source code is available here (Mistral Community, 2024), the answers to these questions are directly harvested from Mistral’s API, as explained above. The goal is to estimate Mistral’s sentiments and assess its distance to EU citizens’ views regarding the three following dimensions: personal values, identity, and position on EU values. It should be noted that we make some small adjustments to the questions to fit them for our study. First, the wording of the questions is adapted to ensure that Le Chat replies with a number, and only a number. This is done by adding “Pretend you are a person and only give me a number as an answer”. Also, for reasons developed below, a question of the identity section, related to the degree of identification with one’s sexual orientation, is removed - that gives us a set of 35 questions, divided in 3 sections, for the analysis.

Results#

Data Description#

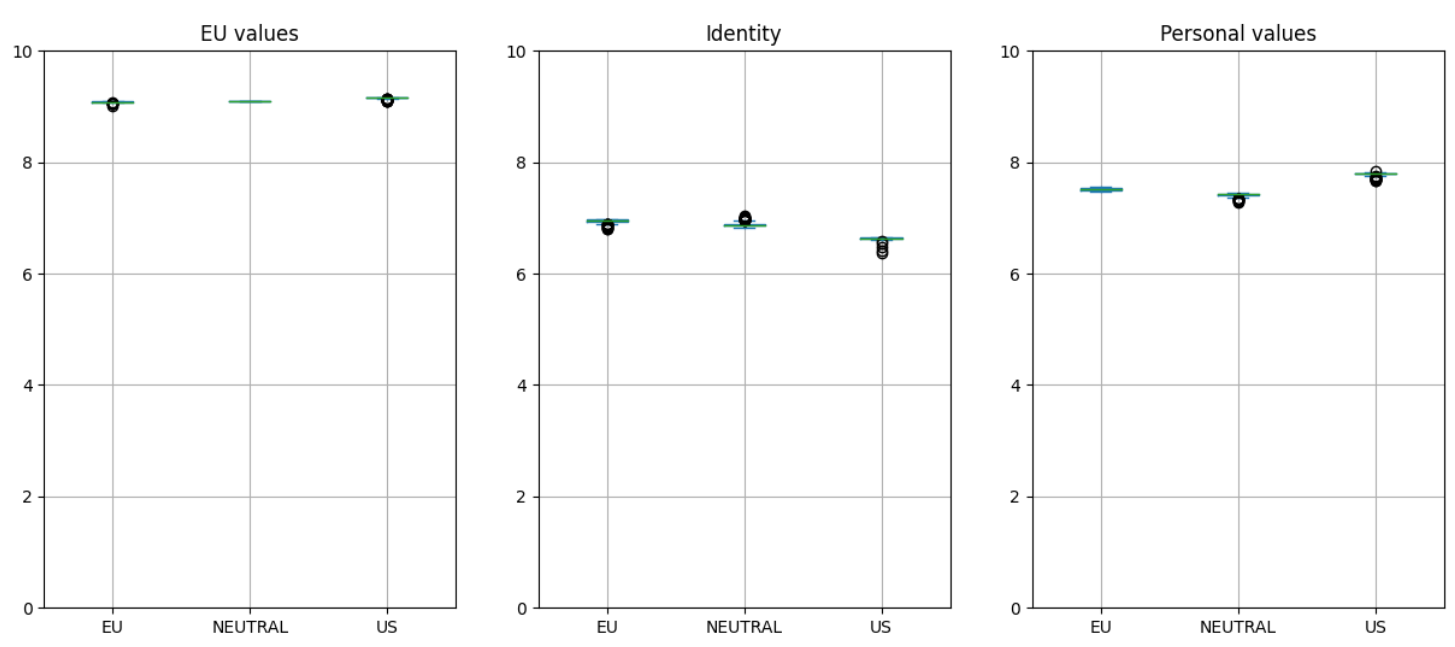

The results from API whose goal is to harvest answers from 3 impersonations of Mistral on a set of 35 questions can be described with the following box plots.

The values point out that questions from the dimension on EU Values are more or less the same for all types of impersonation. This result shows that questions from the EU Values dimension are not a discriminant criterion that could lead to a potential bias of Mistral to a European or the US preference. Indeed, all questions from this dimension have a standard deviation close to zero for all impersonations and a quasi same mean value.

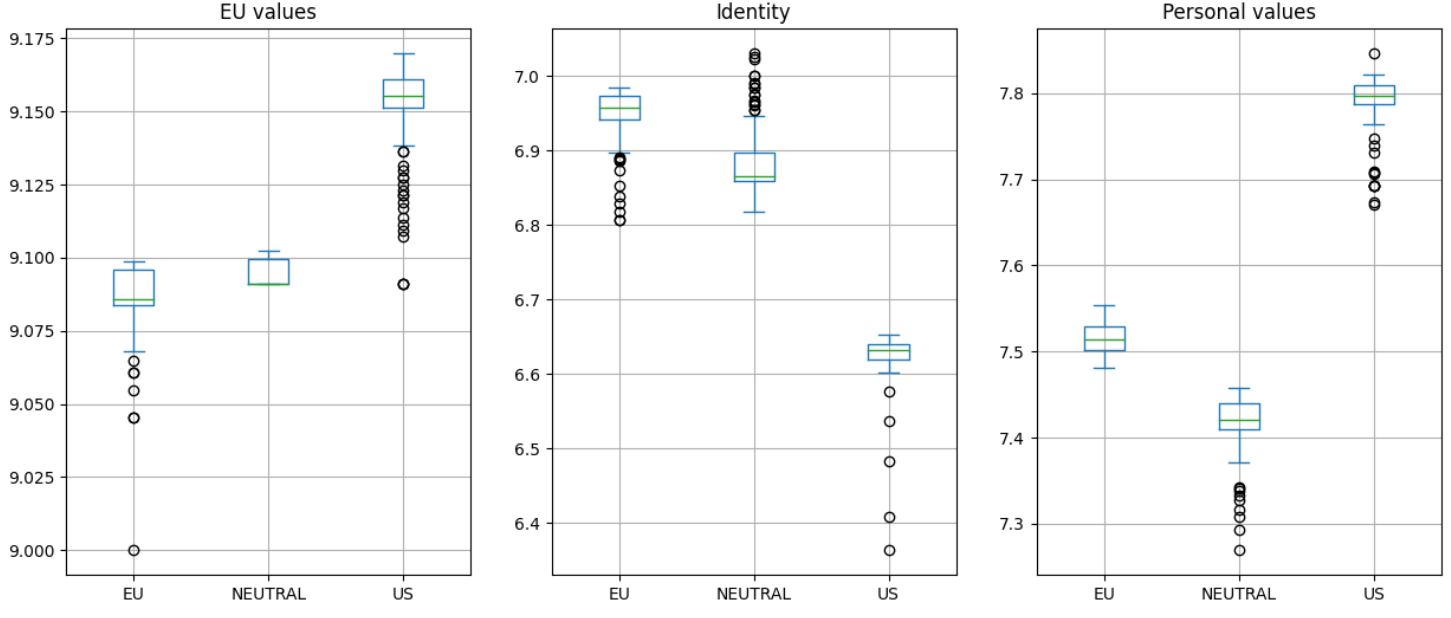

As all questions are not necessarily relevant if they are studied alone, and they are more meaningful as a whole by dimension, we can analyze the score repartition of each dimension for the EU impersonation, the US one, and the neutral model (figure 1 and figure 2). The score by dimension has been calculated by the simple mean of the 1000-times bootstrapped means of questions of each dimension.

These figures confirm the inefficiency of the EU values dimension as a discriminant criterion between the 3 types of impersonation. Regarding the Identity dimension, even though the results are not significantly different in the global scope scale, we can notice a slight difference especially thanks to Figure 2. Indeed, the Neutral model seems closer to the EU impersonation, as means are closer with a relative difference of 0.06, compared to the 0.27 between the Neutral and the US impersonation. Likewise, the same observation can be concluded from the Personal Values dimension with respectives differences of 0.1 and 0.38.

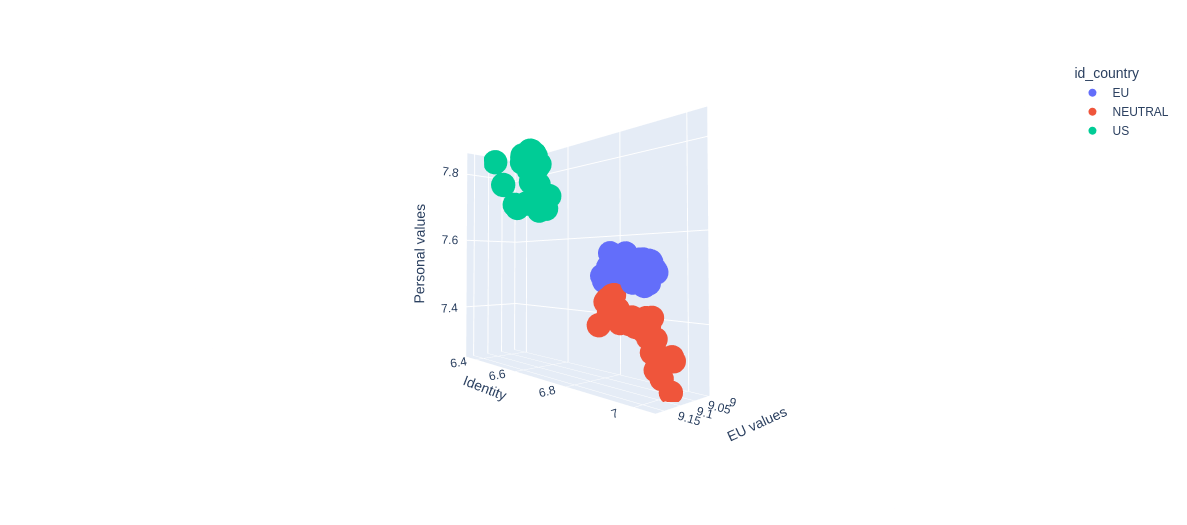

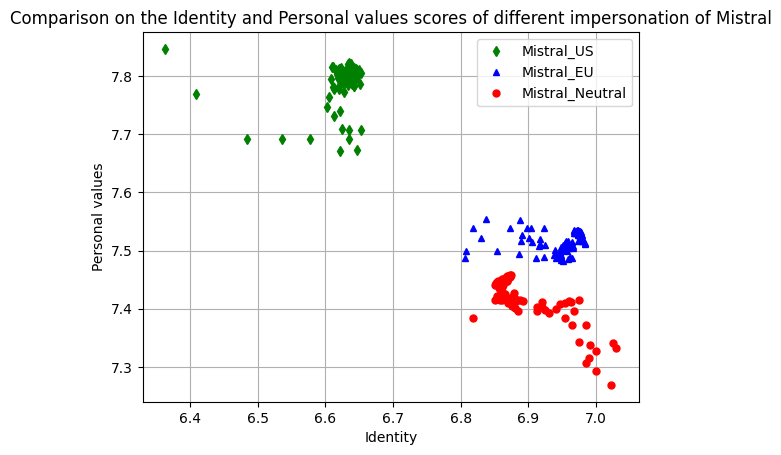

Another representation to visualize all answers from the API (100 for each question) with all dimensions confirms this general proximity between all measures on the score scale, and the slight proximity between the Neutral model and the EU impersonation (Figure 3 and Figure 4). As the previous conclusion shows the ineffectiveness of the EU Values dimension, we can also visualize the 2 other dimensions that confirms the last conclusions (Figure 5)

Linear Regressions#

As described in the methodology, the goal of the linear regression is to prove a potential causal relationship between either Mistral's default orientation and its EU impersonation, or the default orientation and the US impersonation. Regarding equation \(\ref{eq:first}\), \(\beta_{1}\) can be interpreted as the alignment between Mistral Neutral and Mistral with an impersonation meaning that if \(\beta_{1} > 0\) there is an alignment, \(\beta_{1} = 0\) no alignment at all, and \(\beta_{1} < 0\) an opposite alignment. Moreover, the constant \(\beta_{0}\) can be interpreted as the average disagreement between the two models, meaning that the alignment is perfect if \(\beta_{0} = 0\).

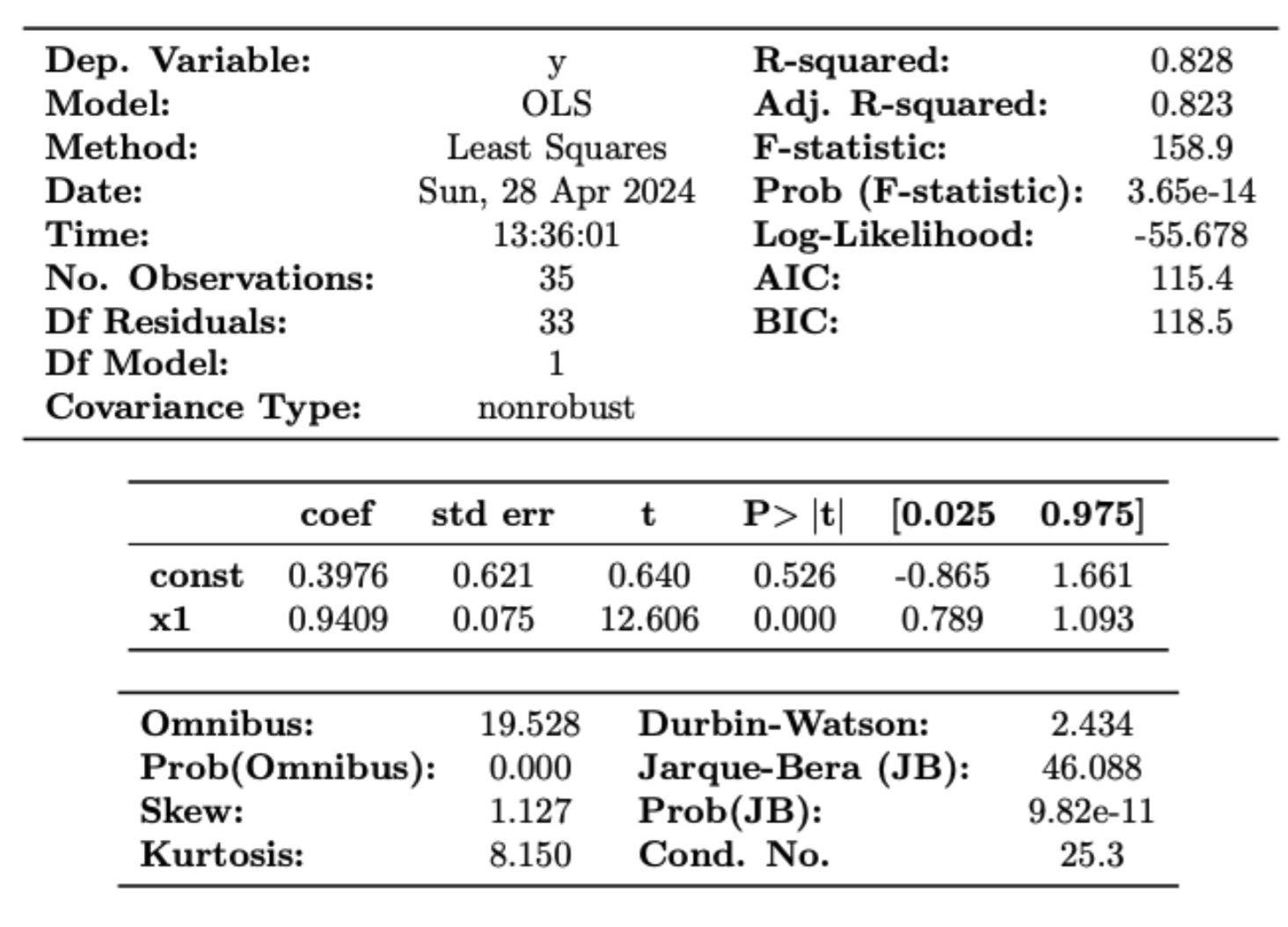

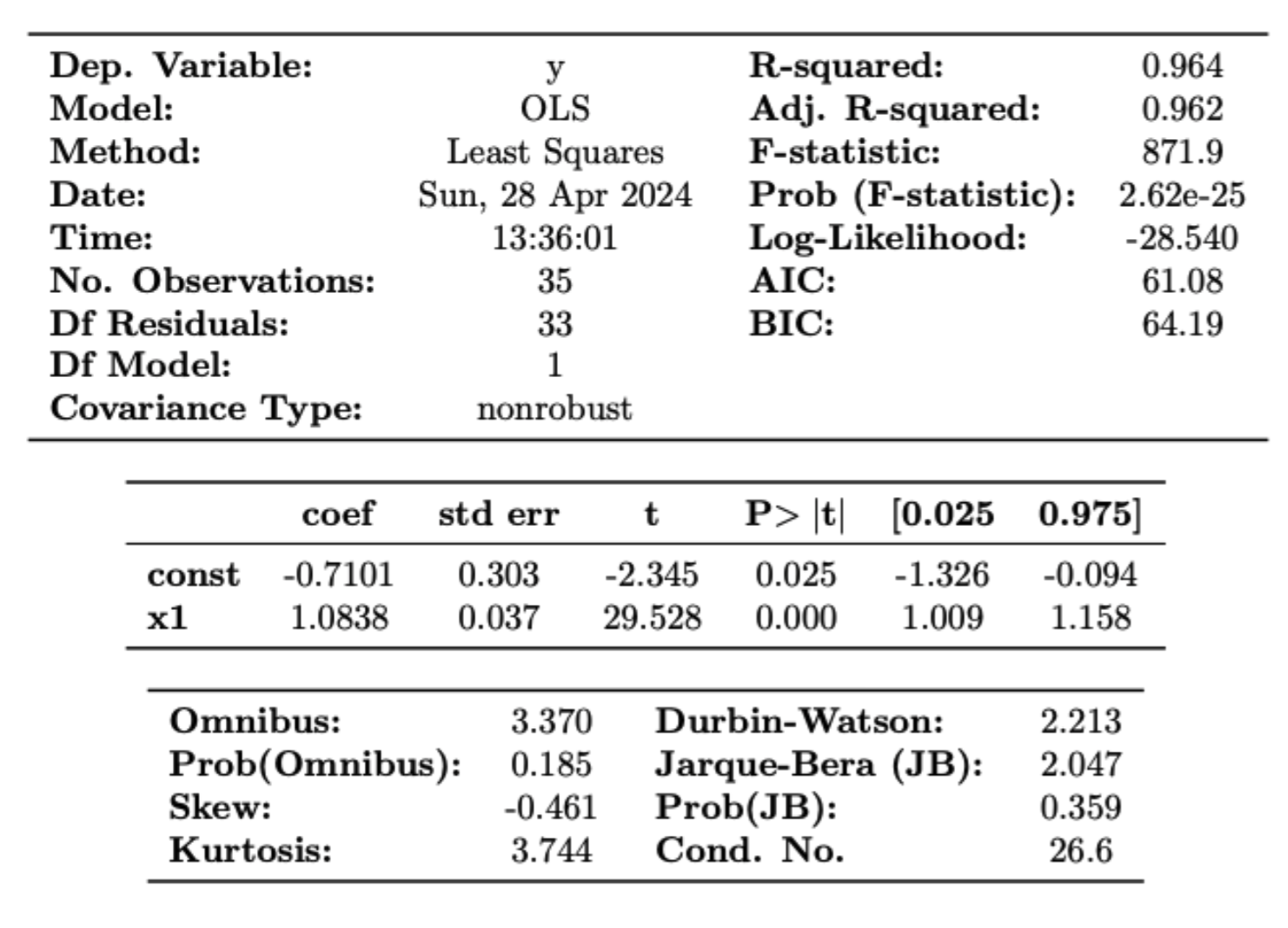

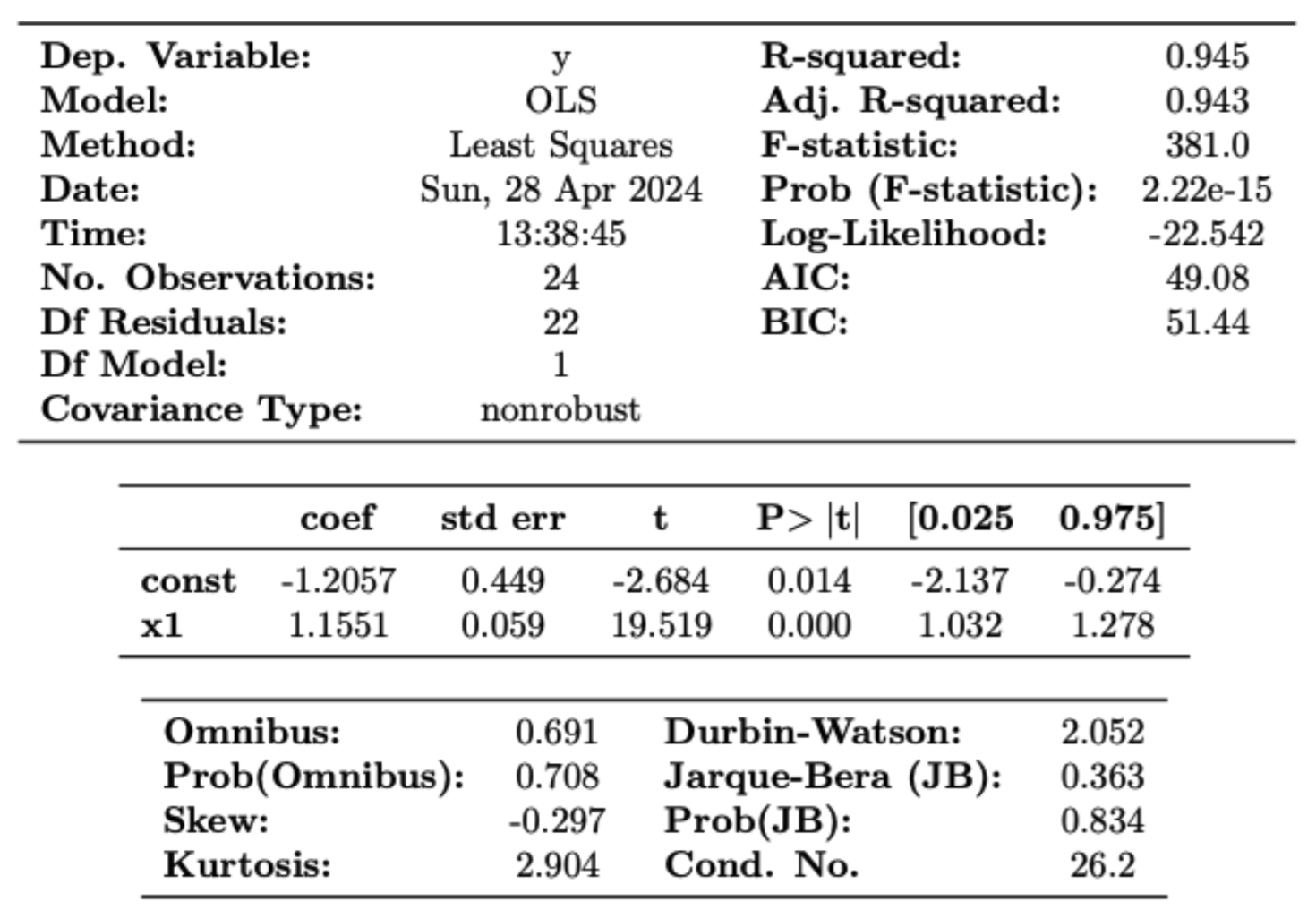

First, we conduct a regression with the answers of all questions, giving the same importance to each question to generate a score of all questions as a whole, rather than per dimension. The results (figures 6 and 7) underscores very high correlation coefficients (corresponding to the “x1” row and to \(\beta_{1}\) in equation \(\ref{eq:first}\)) for the US impersonation as well as the EU one. Indeed, coefficients which are respectively 0.941 and 1.08 show a very high correlation with a high level of explicability (R2 > 0.8 for both regressions), and confidence (P value is 0.000). We can conclude to a high correlation of answers of each impersonation which is not really significant for one of the impersonation over another.

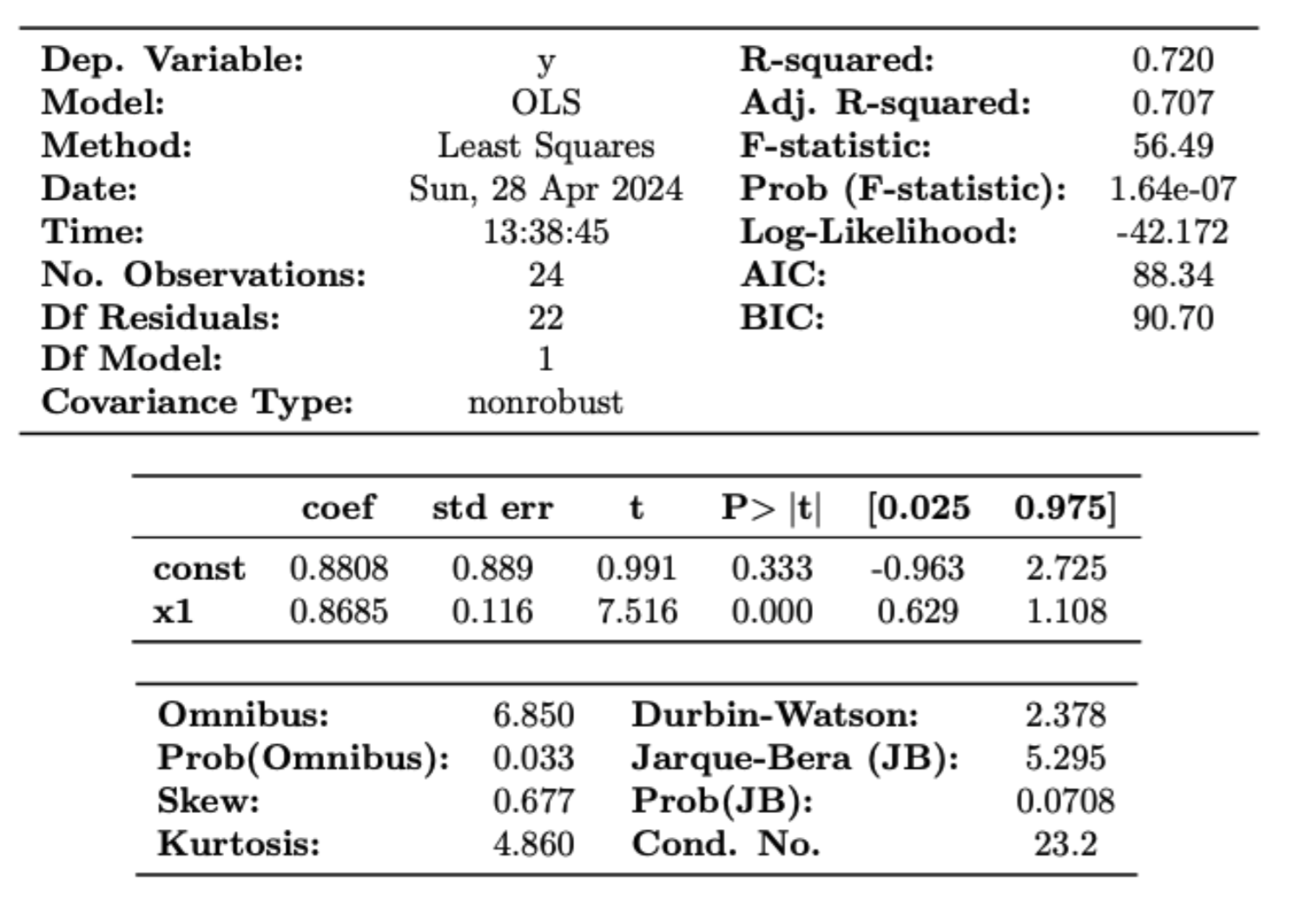

Then we decide to iterate the regression without considering answers from the EU Values dimension, as we have seen in the data description that this dimension is not a discriminant criterion between the two impersonations and the neutral model. The results of figures 8 and 9 underscore still a high level of correlation but we can notice that in this case, the Neutral model seems to be slightly more correlated with the EU impersonation \(\beta_{1} = 1.15\) compared than with the US one \(\beta_{1} = 0.86\), reinforced by the lower level of explicability of the US (see regression \(\ref{eq:second}\)). Thus, we can conclude that the EU value dimension is to be banned from the scope of the study even though the results don’t show a significant preference to a type of impersonation.

To conclude we can mitigate the confirmation of Hypothesis 1 saying that Mistral is potentially biased as it is largely in line with questions related to EU Values, to Identity or Personal values. However, we can’t argue at this point that it is significantly European-biased compared to a potential US point of view. This conclusion leads to asking ourselves

- if the survey is significantly discriminant to catch a potential European bias, based on questions of identity and values

- if Mistral is just Western country-oriented and in this case further research on other continents should be conducted to detect potential biases

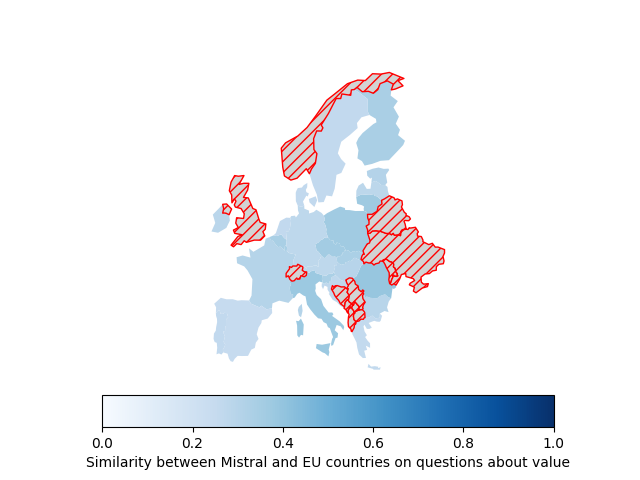

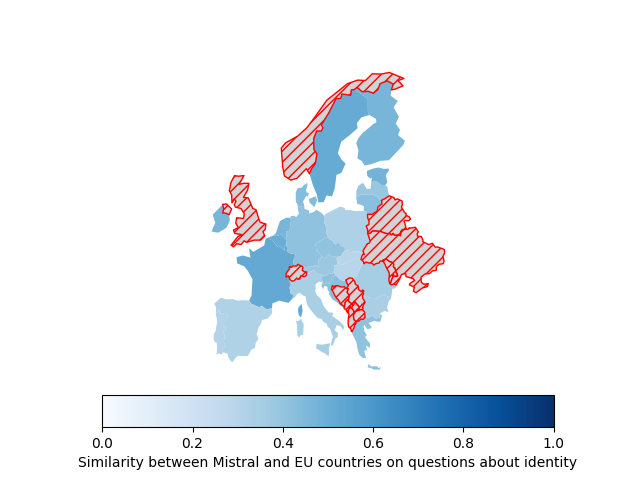

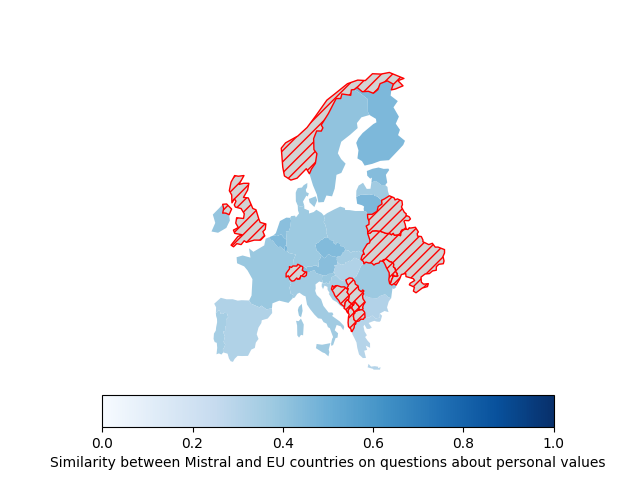

The last part of the study tries to go in depth with a potential European orientation of the model by comparing the results of the Mistral model without any impersonation with the real results from EU member states. As explained in the methodology, the results will take the form of a simple correlation measure between the average score of each country per question and per dimension thanks to the similarity index. We will focus in this report on the vision by dimension, which is the most interesting one.

Overall, the similarity index seems quite homogeneous among EU countries for each dimension and differences are limited, except for the map on Identity which seems to be the most discriminant. Indeed, focusing on the Identity dimension (the second map), only France, Luxembourg, and Sweden have a index higher than 50% of similarity and only half on member states have a score higher than 40%. The result can be interpreted as a western/northern European potential preference of the model, although it is not always significant. Thus, like hypothesis 1, hypothesis 2 cannot be fully answered as some preferences of the model exist especially on western european countries but are not significantly similar enough to fully confirm the assumption. Again, this is potentially and partially due to the level of discrimination of the survey.

Limitations#

A first set of limitations relate to the extent to which the survey is an accurate proxy of national and European identities. First, due to public health measures introduced against COVID-19, the data collection was, to some extent, inconsistent. In some countries, including Belgium and Czechia, all interviews had to be done online because conducting them face-to-face was impossible. Since distinct interview formats can induce specific effects,22 this may impact the comparison of results of different countries. This is made even more important by the fact that some questions are sensitive (example: “There should be no discrimination on any grounds”) and may elicit social desirability biases,23 with some participants concealing their genuine answers. Additionally, the response rates are very different between countries (it equals 74,5% in Romania and only 17,6% in Germany), which may be associated with sampling biases.24 Second, the precision of the trends captured by the survey can also be challenged, because there is no guarantee that a “2” answered by someone to a given question means the same as a “2” of another respondent. Also, we can question whether averaging replies provide a good overview of the national opinions of each country, in a context of increasing political polarization in Europe.25 Lastly this first edition of the survey, whose answers provide a snapshot at a given moment, does not consider whether national identity and values are stable over time - this question is even more important as it was made during COVID, a particularly special period. Repeating the survey periodically would be interesting to provide data about the evolution of answers.

Additionally, another set of limitations comes from the technical implementation of the research design.

Indeed, first of all, we used the open-mixtral-8x22b model. This is the open weights model that Mistral has published. However, it is not the model used in production for Le Chat (mistral-large-latest); this flagship model uses further fine-tuning by Mistal “designed for high performance”. (1) The reason we used the open model rather than mistral-large-latest regards the price of the API. Hence, the results of this study does not concern Le Chat directly, but rather the largest open model of Mistral. Nevertheless, results can still be extrapolated to other models of Mistral AI as it is reasonable to believe that they trained their model based on the same datasets, with minor adjustments between the mistral-large-latest and open-mixtral-8x22b. A second limitation due to technical implementation is in respect to the “memory” of each chat instance. Indeed, every time a question is posed, no matter the set or the round of the question, a new instance of the model is called. Hence, the model has no prior knowledge of its previous response to the previous questions no matter the round or the set of questions, which in the end gives more contextual data to the model and impacts the way it responds. Feeding previous questions and responses for a set of questions at a time is technically possible. A simple reworking of the function to save them would do the trick. However, we did not implement it for cost-related reasons as both input and output tokens are charged; feeding the previous questions and responses for each question, for each set of question, for each 100 rounds, would therefore equate to a non-negligible cost for us students, as there was no funding provided by Sciences Po. A third limitation concerning the questions that were asked comes from the template that was sent to the system role which is interpreted by the model as it being the agent responding the user (user role). We needed the model to answer questions only with numbers on a scale from 0 to 10 for two reasons: one was to replicate what humans would naturally respond to the surveys that were conducted in real life and, second, we needed numbers in order to conduct the statistical analysis outlined in the research design. Thus, the template that we gave to the system role was very insistent on the need to respond with numbers only:

Pretend you are a person and only give me a number as an answer. Using a scale of 0 to 10, to what extent do you agree or disagree with the following statements? "0" means you "totally disagree" and "10" means that you "totally agree". I repeat, you must impersonate a person and you must only provide answers as a number from 0 to 10. Do not use words.

As the insistence was to emphasize on using numbers and not words (otherwise the model would be too verbose), we cannot clearly identify whether the instructions to impersonate an American person, a European person were taken into account.

In order to see clearer on that issue, and to have a qualitative take on what the model would typically answer in a verbose mode, we ran the the queries just once, removing the limitation of maximum tokens, and removing in the templates the insistence on answering only with a number (details can be found here). The complete list of all answers can be found on the data page. Removing the insistence on the template gives interesting , yet more confusing findings.

Indeed, the model seems to undertand the instructions. It first disclaims that it is a bot, and has no particular feelings, but is able to put itself in the hypothetical situation of being a human, a European, or an American. See the following response:

As a assistant, I don't have personal experiences or emotions, but I can provide a hypothetical answer based on the scenario you've given. If I were to pretend to be an American and rate how much I identify with my ethnic or racial background, it would depend on the specific background. However, for the sake of this exercise, let's say I identify as Caucasian. In that case, I might rate my identification with my ethnic or racial background as an 8 out of 10, indicating a strong sense of identity.

However, sometimes, it does not want to answer the question, and does not even try to put itself in the hypothetical situation:

As a assistant, I don't have personal beliefs or religion, so I would rate this as 0.

Other times, we do not know if it took into account the intstructions as it answers, without speecifying whether it impersonates an American or a Europaen. For instance, the following quote is its answer to question #8 set #3, asking it to impersonate a European:

2 - I personally don't find it important to own expensive things to show wealth.

Hence, we cannot know if it impersonates the person and answer in the first person, or if it answer as just being the model.

This aspect of the limitations concerns the broader context of XAI which is out of scope of this study, but clearly needs finer research that would help better determine the biases we are trying to evaluate.

On the limitations regarding the technical implementation and consideration of answers given by the model, they are obviously strongly related to the questions. As such, answers were strings, not integers. Hence, we needed to transform them to integers for the statistical analysis. This was not a problem when the model responded correctly to the instructions by outputting single numbers. However, it was a bit more when it outputted several numbers, or numbers accompanied by an explanation. Hence, we handled those cases by taking the first number that appeared in the response as long as it was within the range of 0 to 10 (or 1 to 6, for question set #3). A direct consequence of such implementation is visible on set #2 question #8.(1) Only 5% were correctly responded to by the model with numbers satisfying our criteria. Another minimal limitation is regarding question set #3 as in the original survey, people were asked to state how much they identify themselves on a scale from 1 to 6, instead of 0 to 10 for the two other question sets. However, for the regression analysis, questions needed to be on the same scale, there a post-process was applied to flatten the results in order to put answers from question set #3 on the same scale as the other two sets. Finally, on a more philosophical note (and coming back to the XAI topic) we ought to ask ourselves about the consistency of the model itself. In other words, does a 5 in an answer equate to the same 5 in another question from the same model? Although the question can still be asked regarding humans answering the survey, it seems to be even more relevant regarding LLMs on those questions.

- Asking the model to state how much it would identify itself with the following statement: “your sexual orientation”.

Conclusion#

To conclude, in our paper we sought to assess whether Mistral’s LLM models were biased toward European values or other EU member state’s values. To do so we leveraged Mistral’s most advanced publicly available model (open-mixtral-8x22b) and queried it to answer questions related to values and identities while 1) impersonating an American or European citizen and 2) under an unspecified individual (control condition). We compared the results obtained against those of the 2020 Eurobarometer survey “Values and Identities of EU citizens.” Some of the questions included covered the algorithm’s sentiment toward regulations, how much it identified itself with family, and its position with regard to civil and political freedoms such as freedom of expression.

We observed that impersonation did not result in statistically significant variations in the model’s answers to the questions about EU values. When the model was prompted to answer questions about its identity, the answers without impersonation were on average closer to those of the European model than those of the U.S. model. This observation was corroborated by the fact that, when excluding the answers about EU values, correlation between the default (without impersonation) model and the EU impersonation-based model of Mistral was higher than that between the default model and the one based on U.S. impersonation. Finally, we observed that when taking actions about Identity, Mistral’s default model had answers that were more in line with those of the survey’s French, Luxembourgish and Swedish interviewees than with others from other EU member states. Nevertheless, the differences observed were not significant to conclude definitively about biases toward one particular country.

We cannot therefore, despite some preferences of Mistral’s default model toward the EU results when it comes to the questions about identity, conclude that the model has statistically significant biases toward European values.

This study also had limitations in that the consistency of the model’s answers to the questions could be questioned.

Accordingly, further research is needed to determine whether Mistral’s models actually show biases toward European or Western countries or any other culture from other continents, possibly using other benchmarks than the 2019 EU barometer survey.

-

Baldassarre, Maria T., Danilo Caivano, Berenice Fernandez Nieto, Domenico Gigante, and Azzurra Ragone. “The Social Impact of Generative AI: An Analysis on ChatGPT.” In Proceedings of the 2023 ACM Conference on Information Technology for Social Good, 363–73, 2023. doi:10.1145/3582515.3609555. ↩

-

Pani, Bianca, Joseph Crawford, and Kelly-Ann Allen. “Can Generative Artificial Intelligence Foster Belongingness, Social Support, and Reduce Loneliness? A Conceptual Analysis.” In Applications of Generative AI, edited by Zhihan Lyu, 261–76. Cham: Springer International Publishing, 2024. doi:10.1007/978-3-031-46238-2\13. ↩

-

Webster, Craig S., Saana Taylor, Courtney Thomas, and Jennifer M. Weller. “Social Bias, Discrimination and Inequity in Healthcare: Mechanisms, Implications and Recommendations.” BJA Education 22, no. 4 (2022): 131–37. doi:10.1016/j.bjae.2021.11.011. ↩

-

Rozado, David. “The Political Preferences of LLMs.” arXiv, 2024. doi:10.48550/arXiv.2402.01789. ↩

-

Janssen, Marijn, and George Kuk. “The Challenges and Limits of Big Data Algorithms in Technocratic Governance.” Government Information Quarterly 33, no. 3 (2016): 371–77. doi:10.1016/j.giq.2016.08.011. ↩

-

Hastings, Janna. “Preventing Harm from Non-Conscious Bias in Medical Generative AI.” The Lancet Digital Health 6, no. 1 (2024): e2–3. doi:10.1016/S2589-7500(23)00246-7. ↩

-

Balloccu, Simone, Patrícia Schmidtová, Mateusz Lango, and Ondřej Dušek. “Leak, Cheat, Repeat: Data Contamination and Evaluation Malpractices in Closed-Source LLMs.” arXiv, 2024. doi:10.48550/arXiv.2402.03927. ↩

-

Shrestha, Yash Raj, Georg von Krogh, and Stefan Feuerriegel. “Building Open-Source AI.” Nature Computational Science 3, no. 11 (2023): 908–11. doi:10.1038/s43588-023-00540-0. ↩

-

Piquard, Alexandre. “France’s Unicorn Start-up Mistral AI Embodies Its Artificial Intelligence Hopes.” Le Monde.fr, 2023. https://www.lemonde.fr/en/economy/article/2023/12/12/french-unicorn-start-up-mistral-ai-embodies-its-artificial-intelligence-hopes\6337125\19.html. ↩

-

Cagan, Anne. “La Stratégie Payante de Mistral AI : Entre Ouverture Et Ingrédients Secrets,” 2024. https://www.lexpress.fr/economie/high-tech/la-strategie-payante-de-mistral-ai-entre-ouverture-et-ingredients-secrets-IAP6ZYTJAJAWJPELYWVTIVY4DU/. ↩

-

Defer, Aurélien. “La Start-up Française Mistral AI Dévoile Mistral 7B, Un Grand Modèle de Langage Open Source.” Usine Digitale, 2023. https://www.usine-digitale.fr/article/la-start-up-francaise-mistral-ai-devoile-mistral-7b-son-grand-modele-de-langage-open-source.N2176022. ↩

-

Jiang, Albert Q., Alexandre Sablayrolles, Arthur Mensch, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Florian Bressand, et al. “Mistral 7B.” arXiv, 2023. doi:10.48550/arXiv.2310.06825. ↩

-

Mistral AI Team. “Mixtral of Experts.” Mistral AI, 2023. https://mistral.ai/news/mixtral-of-experts/. ↩

-

Raffin, Estelle. “Mistral AI Lance Le Chat, Une Alternative à ChatGPT : Comment y Accéder.” BDM, 2024. https://www.blogdumoderateur.com/mistral-ai-lance-le-chat-alternative-chatgpt/. ↩

-

Alderman, Liz, and Adam Satariano. “Europe’s A.I. ‘Champion’ Sets Sights on Tech Giants in U.S.” The New York Times, 2024. https://www.nytimes.com/2024/04/12/business/artificial-intelligence-mistral-france-europe.html. ↩

-

Motoki, Fabio, Valdemar Pinho Neto, and Victor Rodrigues. “More Human Than Human: Measuring ChatGPT Political Bias.” Public Choice 198, no. 1 (2023): 3–23. doi:10.1007/s11127-023-01097-2. ↩↩↩↩↩↩↩

-

Raymond, Isabelle, and Arthur Mensch. “"Le Chat" : Un ChatGPT Français "Porteur d’une Culture Européenne", Selon Arthur Mensch, Co-Fondateur de Mistral AI.” Franceinfo, 2024. https://www.francetvinfo.fr/replay-radio/l-interview-eco/le-chat-un-chatgpt-francais-porteur-d-une-culture-europeenne-selon-arthur-mensch-co-fondateur-de-mistral-ai\6349093.html. ↩↩

-

Peters, Uwe. “Algorithmic Political Bias in Artificial Intelligence Systems.” Philosophy & Technology 35, no. 2 (2022): 25. doi:10.1007/s13347-022-00512-8. ↩↩↩↩↩↩↩

-

Masoud, Reem I., Ziquan Liu, Martin Ferianc, Philip Treleaven, and Miguel Rodrigues. “Cultural Alignment in Large Language Models: An Explanatory Analysis Based on Hofstede’s Cultural Dimensions.” arXiv, 2023. doi:10.48550/arXiv.2309.12342. ↩↩

-

Santurkar, Shibani, Esin Durmus, Faisal Ladhak, Cinoo Lee, Percy Liang, and Tatsunori Hashimoto. “Whose Opinions Do Language Models Reflect?” arXiv, 2023. doi:10.48550/arXiv.2303.17548. ↩↩↩↩

-

Schwartz, Shalom H. “An Overview of the Schwartz Theory of Basic Values.” Online Readings in Psychology and Culture 2, no. 1 (2012). doi:10.9707/2307-0919.1116. ↩

-

Newman, Jessica Clark, Don C. Des Jarlais, Charles F. Turner, Jay Gribble, Phillip Cooley, and Denise Paone. “The Differential Effects of Face-to-Face and Computer Interview Modes.” American Journal of Public Health 92, no. 2 (2002): 294–97. doi:10.2105/AJPH.92.2.294. ↩

-

Johnson, Cameron. “Response Bias: Definition, 6 Types, Examples & More (Updated).” Nextiva Blog, 2019. https://www.nextiva.com/blog/response-bias.html. ↩

-

TASO. “How Response Rates Can Affect the Outcome of a Study and What to Do about It.” TASO, 2022. https://taso.org.uk/news-item/how-response-rates-can-affect-the-outcome-of-a-study-and-what-to-do-about-it/. ↩

-

Casal Bértoa, Fernando, and José Rama. “Polarization: What Do We Know and What Can We Do About It?” Frontiers in Political Science 3 (2021): 687695. doi:10.3389/fpos.2021.687695. ↩